Chip Measuring Contest

The benefits of purpose-built chips.

Time to move forward from decades-old design.

It's important to know where your power comes from.

A closer look at the technology that makes portable electronics possible.

From thingamabobs to rockets, 3D printing takes many forms.

Be kind and rewind.

Reducing datacenter carbon footprints.

Most modern CPUs are just chiplets connected together.

The hardware root of trust.

If the CPU is the brain of the board, the BMC is the brain stem.

Step into the world behind the kernel.

Securely running processes that require the entire syscall interface.

Some of my favorite books.

A list of technical talks I have given.

DUM-E (“dummy”) and U (“you”) are the names of the robot arms in the Iron Man movies. After watching these movies for the n-teenth time, I have a strong urge to have robotic arms in my own workshop, like Tony Stark. You can see the value of the robots clearly throughout the movies. The robots allow Tony to produce suits more quickly, help test the suits, and provide periodic comedic relief.

Tesla had its first Battery Day on September 22nd, 2020[1]. What a fantastic world we live in, that we can witness the first Apple-like keynote for batteries. Batteries are a part of our everyday life; without them, the world would be a much different place. Your cellphone, flashlight, tablet, laptop, drone, car, and other devices would not be portable and operational without batteries. At the heart of it, batteries store chemical energy and convert it into electrical energy.

I previously wrote a bit about our internal infrastructure in my post on The Art of Automation. This post goes into detail about our automated Chief Infrastructure Officer (CIO). I joke about having automated our CIO so often that I even named the repo holding the code… cio. I took the time this weekend to finally clean up some of this code. Previously, our infrastructure was held together with bash, popsicle sticks, glue, and some rust.

I have wanted a 3D printer for a very long time. I hope you can tell from my ACM Queue column that I like to do a lot of research and I tend to want the best thing. I had been keeping my eyes on the 3D printer product space for quite some time. This article is going to go over the technical details behind 3D printing as well as my experience with two different products.

My mom has a tendency to buy these really terribly spec’d Windows machines. She’s been doing it for as long as I’ve been alive. I was surprised when on one of our latest Zoom calls she said “You know what, I’m beginning to think that size matters.” I’ve only been telling her this for years! Here’s the problem. There are a bunch of shitty Windows machines you can buy that cost around $400 and have something like 4GB of RAM.

Being cooped up at home got me looking into the new Xbox and PlayStation 5. I was curious about the innovations in these consoles since their predecessors. Both claim to have ray tracing and support for 8K graphics. This then got me thinking about how prevalent 8K televisions are today. 8K televisions seem to be in the same state 4K televisions were in a few years ago. One thing I have learned in my life is that pixel density will keep increasing.
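Here is a quick worked comparison. It assumes "4K" and "8K" refer to the consumer UHD resolutions of 3840 × 2160 and 7680 × 4320. Doubling both dimensions quadruples the pixel count. How dense those pixels look also depends on screen size, which this arithmetic ignores.

```latex
\[
\begin{aligned}
\text{8K UHD: } 7680 \times 4320 &= 33{,}177{,}600 \text{ pixels} \\
\text{4K UHD: } 3840 \times 2160 &= 8{,}294{,}400 \text{ pixels} \\
\frac{33{,}177{,}600}{8{,}294{,}400} &= 4
\end{aligned}
\]
```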

I am unsure if my love of automation comes from a dislike of doing the same thing twice or an overall desire to be more productive and make everything more efficient. Like a lot of programmers, I often ask myself “can this be scripted” when I find myself doing a manual task. I was inspired recently by reading Wolfram’s writing on his personal infrastructure for productivity[1]. I, too, have written about my personal infrastructure[2], but not at the level of depth or with the same focus on productivity as Wolfram.

A byte of data has been stored in a number of different ways as newer, better, and faster storage media have been introduced. A byte is a unit of digital information that most commonly refers to eight bits. A bit is a unit of information that can be expressed as 0 or 1, representing a logical state. In the case of paper cards, a bit was stored as the presence or absence of a hole at a specific place on the card.
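To make the bit/byte relationship concrete, here is a minimal POSIX shell sketch that prints the eight bits of a single ASCII character. The function name `to_bits` is made up for this example. Reading each 1 as a punched hole and each 0 as no hole is only an analogy, since real punch cards used their own encodings rather than ASCII.

```shell
# to_bits CHAR: print the 8 bits of one ASCII character, most significant first.
# (to_bits is a hypothetical helper written for this example.)
to_bits() {
  n=$(printf '%d' "'$1")   # a leading quote makes printf emit the character's code, e.g. A -> 65
  bits=''
  i=7
  while [ "$i" -ge 0 ]; do
    bits="$bits$(( (n >> i) & 1 ))"   # pick out bit i
    i=$(( i - 1 ))
  done
  printf '%s\n' "$bits"
}

to_bits A   # 65 -> 01000001
```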

When you upload photos to Instagram, back up your phone to “the cloud”, send an email through Gmail, or save a document in a storage application like Dropbox or Google Drive, your data is being saved in a data center. These data centers are airplane hangar-sized warehouses, packed to the brim with racks of servers and cooling mechanisms. Depending on the application you are using, you are likely hitting one of Facebook’s, Google’s, Amazon’s, or Microsoft’s data centers.

I had a lot of fun writing blog posts in the past about my home lab and some of my personal infrastructure, so I thought I would do the same as we built out our office. Whenever I move into a new place, the first thing I plan to have set up on move-in day is internet. We did the same with our office: before we even had any real furniture, we made sure that we had a network connection.

WE STARTED A COMPUTER COMPANY!! You have no idea how long I’ve been waiting to say that! I guess some context would help… Steve Tuck, Bryan Cantrill, and I officially started the Oxide Computer Company. Since then, we’ve been working on wrapping up fundraising, getting an awesome office, and hiring! You are probably thinking, “A computer company? That’s outrageous!” Well, it is and it isn’t. Over the last year, I had the opportunity to spend a lot of time talking with folks who are currently running workloads on premises.

Last week I attended the Open Source Firmware Conference. It was amazing! The talks, the people, and the overall feel of the conference left me feeling inspired and lucky to attend. After being pushed to attend vendor conferences and trade shows for various jobs throughout my career, I found it so refreshing to hang out with folks from such a genuine community who really just want to help one another.

At lunch today I learned about Transactional Synchronization Extensions (TSX), which is Intel’s implementation of transactional memory. The conversation started as a rant about why transactional memory is bad, but then it evolved into how the concept came to be and how it got implemented at all if it’s such a terrible idea. What is transactional memory? Let’s start by going over what it is. You might be familiar with a deadlock.

Hello! I thought it would be fun to write a post aimed at business leaders making technology decisions for their organizations. There is a lot of hype in our field and little truth behind it. Like most things I write about, this started from an idea I had on Twitter: has anyone ever done technical breakdowns of the products in Gartner reports that are actually just trash? Is this something you'd read?

Below is the foreword for the new book Linux Observability with BPF by two of my favorite programmers, David Calavera and Lorenzo Fontana! I was pretty stoked about getting to write the foreword, so I asked O’Reilly if I could publish it on my blog as well, and they said yes. I hope you all check out this book and share what you’ve built afterward! As a programmer (and a self-confessed dweeb), I like to stay up to date on the latest additions to various kernels and research in computing.

“Can I get an encore, do you want more” - Jay-Z. I recently read Ben Horowitz’s book, The Hard Thing About Hard Things. It’s really eye-opening and builds empathy in the reader for leaders who make hard decisions every day. It covers everything from how to know your company is toxic to how to do layoffs. Ben starts each chapter with a rap quote, so I did the same above ;) Obviously I chose Jay-Z, but I also love Tupac, as my first blog post ever shows.

I recently gave a talk at GOTO Chicago on why open source firmware is important, and I thought it would be nice to also write a blog post with my findings. This post will focus on why open source firmware is important for security. Privilege levels: in a typical “stack” today, there are various levels of privilege. Ring 3 - Userspace: has the least privilege, unless there is a sandbox in userspace that restricts it further.

Last week, I had the pleasure of meeting with the Transposit team in San Francisco. Tech is a super small world, and it turns out the two founders and I are separated by one degree through several different people we know. In meeting them I closed many loops without even realizing it, but I digress… Their product is really cool: it exposes a SQL interface for interacting with numerous APIs at once.

I came up with a list of questions I would ask my cloud provider if I were buying a product. They are as follows: 1. What problem is this solving? I would ask this to make sure I even need this product. So many people buy into the hype for “shiny” that they miss whether they even needed the thing in the first place. 2. How did you implement this?

This post is co-authored by Kathy Simpson. “understanding the true nature of instinctive decision making requires us to be forgiving of those people trapped in circumstances where good judgment is imperiled.” ― Malcolm Gladwell, Blink: The Power of Thinking Without Thinking. As leaders, setting up a structure that helps us navigate decisions under pressure is of the utmost importance. When writing and delivering software, we rely on our continuous integration (CI) infrastructure and test suites to tell us when a test is failing and code should not be merged.

Last week I got to see what it was like to be an investigative journalist for a day. It was thrilling. I will get into what I learned, but first I wanted to give some background on why I was doing this. I have a general curiosity about people. It’s interesting to me to uncover what motivates people. Humans are individual snowflakes and no one is exactly like the next.

I’ve been talking to a lot of people in different layers of the stack during my funemployment. I wanted to share one of the problems I’ve been thinking about, and maybe you can think of some clever solutions. Conway’s Law states “organizations which design systems … are constrained to produce designs which are copies of the communication structures of these organizations.” If you were to apply Conway’s Law to all the layers of the software stack and open source software, you’d see a problem: there is not sufficient communication between the various layers of software.

I recently started researching and playing around with RISC-V for fun. I thought it might be nice to combine some of what I’ve learned into a blog post. However, I don’t just want to highlight what I learned. I want to use this as an example of how to go about learning something new. Recently, Erik St. Martin, Shubheksha Jalan, and I were discussing how we learn new things, and we all thought it might be beneficial to have a way to document this process for others.

I learned a lot about myself and the way big companies are organized over the past year or so. I mentioned “the N + 1 shithead problem” (from Bryan Cantrill’s talk on leadership) in a previous blog post and podcast. To reiterate, the “N + 1 shithead problem” occurs when you are demotivated by seeing people who are a level above you behave poorly or, more bluntly, behave like a shithead.

I have written a bit about how I am spending my time while unemployed, and I thought I would continue. There was one thing I had left out of my previous post on my visit to the Pentagon. THEY HAVE A REAL ENIGMA MACHINE THERE. Okay, moving on… QCon and University of Cambridge: I gave a talk at QCon on SGX and ended up giving the same talk to some really awesome folks at the University of Cambridge.

I stated in my first post reflecting on leadership in other industries that I would write a follow-up post after hanging out in the world of finance for a day. This is pretty easy to do when you live in NYC. In college, I was originally a finance major at NYU Stern School of Business before transferring out, so I have always had a bit of an affinity for it.

I’ve had a bit of a crazy week. Tuesday, I got a tour of the Pentagon from a friend who is in the US Digital Service (USDS) for the Department of Defense (DoD), called the Defense Digital Service (DDS). Wednesday (the day of writing this), I shadowed a friend who is a surgical resident during their shift at a hospital. Friday, I plan to shadow a friend who is an investment banker at a private equity firm, and I will write a follow-up post.

A few of you (thank you!) have reached out to me saying that you love my writing style. It means a lot to me because I like to think that I write how I speak. This was not always taken well, however. I tend to be a bit of a sarcastic troll. The following post is meant for others who may be like me and hesitant about their writing style due to feedback they’ve gotten.

I like to consider all the variables in a problem space before coming to a conclusion. As humans we have a tendency to jump to conclusions rather quickly. I try not to do this but everyone makes mistakes. More information about Intel SGX was brought to my attention after my initial blog post on it. I’d like to take the time to go through that information and my current thoughts on the technology after having this extended context.

I’m a huge, HUGE fan of LD_PRELOAD. Let me tell you… oh wait, it’s my blog, so I’m going to. Where do I begin… About three years ago, I wrote a blog post about the 10 LDFLAGS I love. After writing the post, I realized I should have made the number odd because I think that is part of BuzzFeed’s “click algorithm.” But more seriously, I realized just how many people on the internet you can upset when you don’t include LD_PRELOAD in your favorite LDFLAGS post.

From the Intel x86 Manual: In the mid-1960s, Intel cofounder and Chairman Emeritus Gordon Moore had this observation: “… the number of transistors that would be incorporated on a silicon die would double every 18 months for the next several years.” Over the past three and half decades, this prediction known as “Moore’s Law” has continued to hold true. Lately, Moore’s Law keeps coming up in the context of its coming to an end.
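This example takes the manual's 18-month doubling period at face value and works out the growth it implies over three and a half decades (420 months). Here $N_0$ is the starting transistor count and $T$ is the doubling period:

```latex
\[
N(t) = N_0 \cdot 2^{t/T}, \qquad T = 18 \text{ months}
\]
\[
\frac{N(420 \text{ months})}{N_0} = 2^{420/18} = 2^{23.\overline{3}} \approx 1.06 \times 10^{7}
\]
```

In other words, an 18-month doubling sustained for 35 years is roughly a ten-million-fold increase.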

I started dipping into some firmware and hardware things during my vacation and unemployment, and I figured I would take you along on my journey. Baseboard management controller: the first thing I dipped into was OpenBMC. This is pretty cool. At face value, it supports a lot of different boards. It uses IPMI (Intelligent Platform Management Interface) to perform tasks for monitoring and operating the components of a computer.

I thought it would be fun to start a blog post series containing design docs from my personal archive that never saw the light of day. This is the first in the series. It lays out my detailed thinking on a general multi-tenant, secure container orchestrator. The use case would be running third-party code, with tenants securely isolated from each other. If you would like to see this in Google Doc form, it also lives here.

My most-used shell command is |. This is called a pipe. In brief, the | lets the output of one program (on the left) become the input of another program (on the right). It is a way of connecting two commands together. For example, if I run echo "hello", I get the output hello. But if I run echo "hello" | figlet, the figlet program changes the letters in hello to look all bubbly and cartoony.
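Since figlet isn't installed everywhere, here is a sketch of the same idea using only standard tools (`tr` and `sed`). The `shout` function is a hypothetical example. It shows that a pipeline can live inside a function and still read from the pipe feeding it.

```shell
# A pipe connects the stdout of the command on the left to the stdin of the
# command on the right. Here tr upper-cases whatever it reads.
echo "hello" | tr '[:lower:]' '[:upper:]'   # prints HELLO

# shout is a made-up helper: two filters chained by their own pipe,
# reading whatever is piped into the function.
shout() {
  tr '[:lower:]' '[:upper:]' | sed 's/$/!/'
}

echo "hello" | shout                        # prints HELLO!
```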

I thought it might be fun to write a blog post on “The Life of a GitHub Action.” When you go through orientation at Google, they walk you through “The Life of a Query,” and it was one of my favorite things. So I am applying the same idea to a GitHub Action. For those unfamiliar, Actions was a feature launched last year at Universe, GitHub’s conference. You can sign up for the beta here.

I have realized recently that a lot of people think I am just a shill for Kubernetes, and I am not. What I have done is write a few blog posts on some interesting problems to be solved in Kubernetes. But I would like to emphasize that those problems are pretty specific to the way Kubernetes was designed, and you could easily build your own orchestrator without them. Use containerd: if you need an example of a custom, minimal orchestrator built with containerd, you should check out stellar.

WireGuard is the hip, new way to VPN :P No, but seriously, I wanted to try it out because it is super interesting and I think the direction it is going is awesome. Read about it on their website if you have not already. What is cool about WireGuard is that it integrates into the Linux networking stack, so you have a lot of power over interactions with it. In other words, it is very easy to clone the interface into specific containers.

There seems to be some confusion around sandboxing containers as of late, mostly because of the recent launch of gVisor. Before I get into the body of this post, I would like to make one thing clear. I have no problem with gVisor itself. I think it is very technically “cool.” I do have a problem with the messaging and marketing around it. There is a large amount of ignorance towards the existing defaults that make containers secure.

EDIT: See my post on a design doc for a multi-tenant orchestrator instead. I wrote this when an internal requirement was to use Kubernetes, but I do not personally think you should use Kubernetes for this use case. Kubernetes is the new kernel. We can refer to it as a “cluster kernel” versus the typical operating system kernel. This means a lot of great things for users trying to deploy applications.

A lot of people seem to want to be able to build container images in Kubernetes without mounting in the docker socket or doing anything else to compromise the security of their cluster. This was brought to my attention when my awesome coworker Gabe Monroy and I were chatting with Michelle Noorali over pizza at KubeCon in Austin last December. Here is pretty much how it went down:

This is a story about how I got nerd-sniped by a blog post from Cloudflare Engineering. The TL;DR of their post is that you can script in Go if you use BINFMT_MISC in the kernel. BINFMT_MISC is really well documented and awesome. In the end, all they had to do to script in Go was to mount the filesystem: $ mount binfmt_misc -t binfmt_misc /proc/sys/fs/binfmt_misc Then, register the Go script binary format:
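The registration step itself is cut off in this excerpt, so the following is not the command from Cloudflare's post. It is a sketch of the generic registration string described in the kernel's binfmt_misc documentation, `:name:type:offset:magic:mask:interpreter:flags`, where type `E` matches on file extension. The function `binfmt_ext_rule` and the interpreter path `/usr/local/bin/go-runner` are hypothetical placeholders.

```shell
# binfmt_ext_rule NAME EXT INTERPRETER: build a binfmt_misc registration line
# that matches files by extension (type E). Offset and mask are left empty
# because they do not apply to E rules; flags are left empty too.
# (Hypothetical helper; the field layout follows the kernel's binfmt_misc docs.)
binfmt_ext_rule() {
  name=$1 ext=$2 interpreter=$3
  printf ':%s:E::%s::%s:\n' "$name" "$ext" "$interpreter"
}

binfmt_ext_rule golang go /usr/local/bin/go-runner   # placeholder interpreter path
# -> :golang:E::go::/usr/local/bin/go-runner:

# Registering needs root and the binfmt_misc mount shown above, e.g.:
# binfmt_ext_rule golang go /usr/local/bin/go-runner | sudo tee /proc/sys/fs/binfmt_misc/register
```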

This post is kind of like “part two” of my series on all the weird things I do for my personal infrastructure. If you missed “part one”, you should check out Home Lab is the Dopest Lab. I run a lot of little things to make my life easier, like a CI, some bots, and a bunch of services just for the lolz. This post will go over all of those.

I always have some random side project I am working on, whether it is making the world’s most over-engineered desktop OS all running in containers or updating all my Makefiles to be the definition of glittering beauty. This post is going to go over how I recently redid all my home networking and ultimately how I got here: ssh-ed into my dev NUC from a Pixelbook at 39,000 feet, authenticated with an SSH key on a YubiKey. The future is dope AF.

I recently started a job at Microsoft. In my first week I have already learned so much about Windows that I figured I would try to put it all into writing. This post is coming to you from a Windows Subsystem for Linux console! I'm headed to Seattle because I'M JOINING MICROSOFT, at the airport wearing this awesome shirt from @listonb & @Taylorb_msft pic.twitter.com/8rnAg1dsPd — jessie frazelle (@jessfraz) September 4, 2017

I recently gave a talk at DevOps Days (slides), and it had a pretty great response. I’m still pretty care-mad about the topics it covered, so I figured I would turn some key points from it into a blog post. The overall outline of the talk covered the past, present, and future of usable security. Let’s start with the past. A lot of the security tooling of the past (that we still use today) requires users to jump through a lot of hoops or learn a hard-to-grok interface.

If you are new to my blog then you might be new to the concept of Linux kernel namespaces. I suggest first reading Getting Towards Real Sandbox Containers and Setting the Record Straight: containers vs. Zones vs. Jails vs. VMs. Linux namespaces are one of the primitives that make up what is known as a “container.” They control what a process can see. Cgroups, the other main ingredient of “containers”, control what a process can use.
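On a Linux machine you can inspect both ingredients for the current process through /proc. This sketch assumes /proc is mounted. `ns_id` is a made-up helper name, and the inode numbers it prints differ from system to system.

```shell
# Every process has one handle per namespace type under /proc/<pid>/ns.
# Two processes whose links show the same inode number share that namespace.
ls -l /proc/self/ns

# ns_id TYPE: print the namespace identity for TYPE (uts, pid, net, mnt, ...).
# /proc/self resolves to readlink's own process here, which inherits the
# calling shell's namespaces, so the answer matches this shell's.
ns_id() {
  readlink "/proc/self/ns/$1"
}

ns_id uts    # something like uts:[4026531838]; the number varies

# Cgroup membership (what the process can use) is listed separately:
cat /proc/self/cgroup
```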

I’m tired of having the same conversation over and over again with people, so I figured I would put it into a blog post. Many people ask me whether I have tried Solaris Zones and BSD Jails, or what I think of them. The answer is simply: I have tried them and I definitely like them. The conversation then heads towards them telling me how Zones and Jails are far superior to containers and that I should basically just give up on Linux containers and use VMs.

Over the past couple of years I have set out to create the ultimate Linux on the desktop experience for myself. Obviously everyone who runs Linux has their own opinions on things. What this post will outline is my ultimate Linux on the desktop experience. So just remember that before you get your panties in a knot on Hacker News because you live and die by Xmonad (I live and die by i3, fight me).

It all started innocently enough. I had “jfrazelle” as my GitHub handle for years, but my Twitter, IRC and other handles are all “jessfraz”. No one on GitHub was actually using “jessfraz” so I sat on it waiting to make my move. I’m currently on vacation this week so of course I was looking to break all the things. One thing you must know about me is that at no point was I thinking I hate this.

Last week, I gave a talk at GitHub Universe, and afterwards several people suggested I write a blog post on it. Here it is. This post will cover the intricacies of “choosing your battle” and how personal passion for a project might conflict with corporate motives. I have experienced open source from the side of the contributor, the side of the maintainer, and the side of the corporate-backed maintainer and contributor.

I was inspired last night by Cate Huston’s post, The Day I Leave the Tech Industry. I decided to write my own, except I’m not as eloquent a writer as Cate so before I go any further please, please, please read her post and not mine. Mine is going to be a bit different. Lately I’ve been thinking more and more about this. It seems imminent. I’m only 27 and let me repeat: it seems imminent.

I really enjoyed Felipe Hoffa’s post on Analyzing GitHub issues and comments with BigQuery, which got me wondering about my favorite subject ever, The Art of Closing. I wonder what the stats are for the top 15 projects on GitHub in terms of pull requests opened vs. pull requests closed. This post will use the GitHub Archive dataset. Top 15 repositories with the most pull requests: first, let’s find the top 15 repos with the most pull requests from 2015.

This blog post is going to be a bit different. After watching Stranger Things, my friend and I started discussing scary movies from our childhood. I couldn’t help but remember a very specific strange thing that happened to me growing up. I thought, hey, this would be a kinda weird blog post. So here it is. The following events are factual. It was a hot, dry summer in July of 1995 in Phoenix, Arizona.

Hello and welcome to what will become the most sarcastic post on my blog. This is going to be a series of “buzzfeed” style programming articles and after this post I very happily pass the baton to Filippo Valsorda to continue. And I urge you to write your own as well. @jessfraz "We asked Jess for her top 10 ldflags; you won't believe what happened next" — adg (@enneff) July 17, 2016

Being an open source software maintainer is hard. The following post is geared towards maintainers, not contributors. If you are a new contributor to open source, I would stop reading now because I don’t want to give you the wrong idea or discourage you. Tons of patch requests get merged every day, but this post is going to focus on the ones that don’t. I’ve talked to maintainers from several different open source projects (Mesos, Kubernetes, Chromium), and they all agree that one of the hardest parts of being a maintainer is saying “No” to patches you don’t want.

Containers are all the rage right now. At the very core of containers are the same Linux primitives that are also used to create application sandboxes. The most common sandbox you may be familiar with is the Chrome sandbox. You can read in detail about the Chrome sandbox here: chromium.googlesource.com/chromium/src/+/master/docs/linux_sandboxing.md. The relevant aspect for this article is the fact it uses user namespaces and seccomp. Other deprecated features include AppArmor and SELinux.
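The kernel reports the seccomp mode of each process in the `Seccomp:` line of /proc/<pid>/status. This small sketch reads that field. The helper name `seccomp_mode` is hypothetical, and the sketch assumes Linux with /proc mounted. A process confined by seccomp-bpf, as Chrome's sandboxed processes are, shows mode 2.

```shell
# seccomp_mode [PID]: print the seccomp mode the kernel reports for a process.
# With no PID, /proc/self resolves to the awk process, which inherits this
# shell's seccomp state.
#   0 = disabled, 1 = strict, 2 = filter (seccomp-bpf)
seccomp_mode() {
  awk '/^Seccomp:/ { print $2 }' "/proc/${1:-self}/status"
}

seccomp_mode   # typically 2 inside a container started with Docker's default profile
```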

Sup, let me give you fair warning here. Everything contained in this post is my opinion, so don’t go getting your panties all in a knot on Hacker News because you don’t agree with me. I honestly couldn’t care less, because that’s the thing about my opinion: it’s mine. I am going to give you my honest and, dare I say it, “blunt” opinion about each of the Docker graphdrivers so you can decide for yourself which one is best for you.

This is so cool I can hardly stand it. In Docker 1.10, the awesome libnetwork team added the ability to assign a specific IP to a container. If you want to see the pull request, it’s here: docker/docker#19001. I have an IP block from OVH for my server with 16 extra public IPs. I totally use these for good and not for evil. But previously, using these with Docker containers meant hackery with the awesome pipework.

Almost exactly a year ago, I wrote a post about running Docker Containers on the Desktop. Well it is a new year, and I have ended up converting all my docker containers to runc configs, so it’s the perfect time for a new blog post. For those of you unfamiliar with the Open Container Initiative you should check out opencontainers.org. Why the switch? you ask… well let me explain.

If you weren’t aware, user namespace support was added to Docker a while back in the “Experimental” builds. But with the upcoming release of Docker Engine 1.10.0, Phil Estes is working on moving it into stable. Now this is all super exciting and blah blah blah, but what I am going to talk about today is how I started running all the containers from my Docker Containers on the Desktop with the new user namespace support.
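User namespace remapping works by giving each namespace a UID map. Each line of /proc/<pid>/uid_map reads "first UID inside, first UID outside, count". This sketch only models that lookup in awk and does not touch the kernel or Docker. The helper `map_uid` is hypothetical. The `0 100000 65536` range is an illustrative subordinate-UID range, not necessarily what Docker picks on your machine.

```shell
# map_uid UID MAP: translate a UID as seen inside a user namespace into the
# UID in the parent namespace. MAP uses the uid_map line format:
#   <first UID inside> <first UID outside> <number of UIDs>
# Exits 1 if UID falls outside every range.
map_uid() {
  uid=$1 map=$2
  printf '%s\n' "$map" | awk -v u="$uid" '
    u + 0 >= $1 && u + 0 < $1 + $3 { print $2 + (u - $1); found = 1; exit }
    END { if (!found) exit 1 }'
}

# Hypothetical remap range: container UIDs 0-65535 -> host UIDs 100000-165535.
map_uid 0 '0 100000 65536'      # prints 100000 (root in the container)
map_uid 1000 '0 100000 65536'   # prints 101000

# This process's own map; in the initial user namespace it is typically 0 0 4294967295.
cat /proc/self/uid_map
```

This is why root inside a remapped container is an unprivileged UID on the host.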

In case you missed it, we recently merged a default seccomp profile for Docker containers. I urge you to try out the default seccomp profile, mostly so we can rest easy knowing the defaults are sane and your containers work as before. You can download the master version of Docker Engine from master.dockerproject.org or experimental.docker.com. We even have a doc describing the syscalls we purposely block and security vulnerabilities the profile blocked.

Usually when you think of a VPN, you think of accessing an office network from somewhere outside the office. A reverse VPN is for exposing things from your home network into the public. Why? Well for one, you shouldn’t want to expose your home network to the world. There are a lot of risks in doing that. A reverse VPN allows you to securely control what you are exposing.

I went to a meetup recently where a talk was given by Cara Marie of the NCC Group. She talked about decompression bombs and the various compression algorithms that can create these malicious artifacts. You might be familiar with Russ Cox’s post Zip Files All The Way Down, which goes over self-reproducing zip files. However, most programs will not decompress the files from his blog post recursively. That leaves us with the problem of the more sophisticated decompression bomb.
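A common defense is to cap how many bytes you are willing to inflate rather than trusting the compressed size. This is a minimal sketch of that idea for gzip, not necessarily anything from the talk. `gzip_exceeds` is a hypothetical helper, and `head -c` is a GNU/BSD extension rather than POSIX.

```shell
# gzip_exceeds LIMIT FILE: succeed (exit 0) if FILE inflates to more than
# LIMIT bytes. head stops after LIMIT+1 bytes, and gzip is then cut off by
# SIGPIPE instead of inflating the whole stream.
gzip_exceeds() {
  limit=$1 file=$2
  n=$(gzip -dc -- "$file" | head -c "$((limit + 1))" | wc -c | tr -d ' ')
  [ "$n" -gt "$limit" ]
}

# A toy bomb: 1,000,000 zero bytes compress to roughly a kilobyte.
bomb=$(mktemp)
head -c 1000000 /dev/zero | gzip > "$bomb"
wc -c < "$bomb"

if gzip_exceeds 4096 "$bomb"; then
  echo "refusing to inflate: output would exceed 4096 bytes"
fi
rm -f "$bomb"
```

Because the check never buffers more than LIMIT+1 bytes of output, it stays cheap no matter how extreme the compression ratio is.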

Okay so this is part 2.5 in my series of posts combining my two favorite things, Docker & Tor. If you are just starting here, to catch you up, the first post was “How to Route all Traffic through a Tor Docker container”. The second was on “Running a Tor relay with Docker”. I thought it only made sense to show how to set up a Tor socks5 proxy in a container, for routing some traffic through Tor; in contrast to the first post, where I explained how to route all your traffic.

This post is part two of what will be a three-part series. If you missed it, part one was How to Route Traffic through a Tor Docker container. I figured it was important, if you are going to be a Tor user, to document how you can help the Tor community by hosting a Tor relay. And guess what? You can use Docker to do this! There are three types of relays you can host: a bridge relay, a middle relay, and an exit relay.

My least favorite topic in the world is ‘Women in Tech’, so I am going to make this short but I think it’s something that needs to be said. This industry is fucked. Ever since I started speaking at conferences and contributing to open source projects I have been endlessly harassed. I’ve gotten hundreds of private messages on IRC and emails about sex, rape, and death threats. People emailing me saying they jerked off to my conference talk video (you’re welcome btw) is mild in comparison to sending photoshopped pictures of me covered in blood.

So it turns out I’m pretty bad at vacation. I had this idea for a blog post and one thing led to another and here we are… You probably know by now I hate installing things on my host. At my previous job we did a lot of work using Python and R for data science. I still love plotting data with ggplot and my favorite R package, wes anderson color palette.

This blog post is going to explain how to route traffic on your host through a Tor Docker container. It’s actually a lot simpler than you would think. But it involves dealing with some unsavory things such as iptables. Run the image: I have a fork of the tor source code and a branch with a Dockerfile. I have submitted it upstream… we will see if they take it. The final result is the image jess/tor, but you can easily build locally from my repo jessfraz/tor.

This is a tale about how we use Docker to test Docker. Yes, I am familiar with the meme. Puhlease. Many of you know that I work on the Docker core team, which means fixing bugs, doing releases, reviewing PRs, hanging out on IRC and mailing lists, etc etc etc. But what you may not know is that in addition to all these things, I also manage our testing infrastructure.

Hello!

If you are not familiar with Docker, it is the popular open source container engine.

Most people use Docker for containing applications to deploy into production or for building their applications in a contained environment. This is all fine & dandy, and saves developers & ops engineers huge headaches, but I like to use Docker in a not-so-typical way.

I use Docker to run all the desktop apps on my computers.

Hello!

This blog post is going to go over how to create a Linux partition on your Mac and have everything working successfully.

Okay, so let’s begin with: sudo rm -rf / && sudo kill -9 1.

Hold the phone.

That was a test. I really hope you didn’t just copy, paste, and run a command on your host without knowing anything about the author. A bit about me… I have run this install about a dozen times on my Mac, with various changes along the way. I can finally say I found the perfect way to install Linux, specifically Debian Jessie, on a Mac.

So now let’s actually get started.

I would just like to preface this by saying I do not condone cheating, but I thought of this as a “challenge” and not so much as “cheating”.

A project I am working on required me to check in to places on foursquare that I was not currently near (or even close to). The answer to this was pretty simple: check in through the API using the lat and long of the venue I was “supposedly” at. Boom. It worked without a flaw. OK, I will admit it: I am kind of a competitive person, and, well, the foursquare badges are so pretty that I immediately started thinking about how I could check in remotely and collect them all. But surely, surely foursquare must have some sort of safeguards in place that do not allow this. Because I was ever so curious to find out what they might be (…and how to get around them), I decided to try.

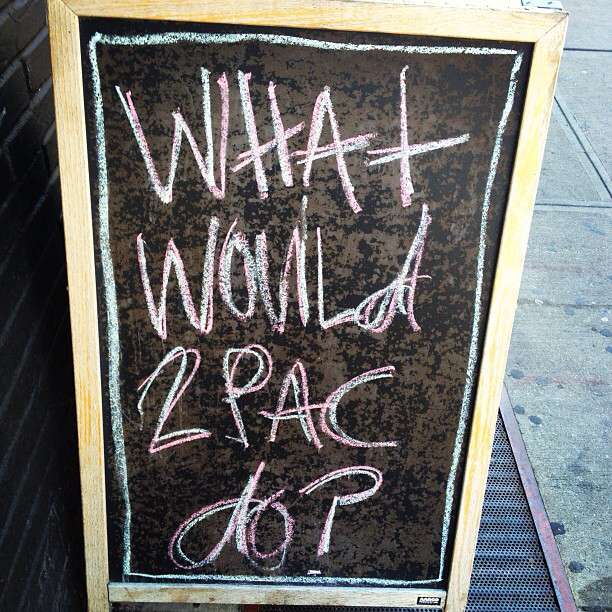

I saw this sign outside a coffee shop. Most people would just walk by and laugh, but it got me thinking. What would 2PAC do? Seeing as 2PAC is one of my favorite artists and I was already walking with earbuds on, I started playing an oldie but goodie on my iPhone, “Changes”.